Regulatory Validation Framework for Clinical Trial AI

Co-Development Working Session

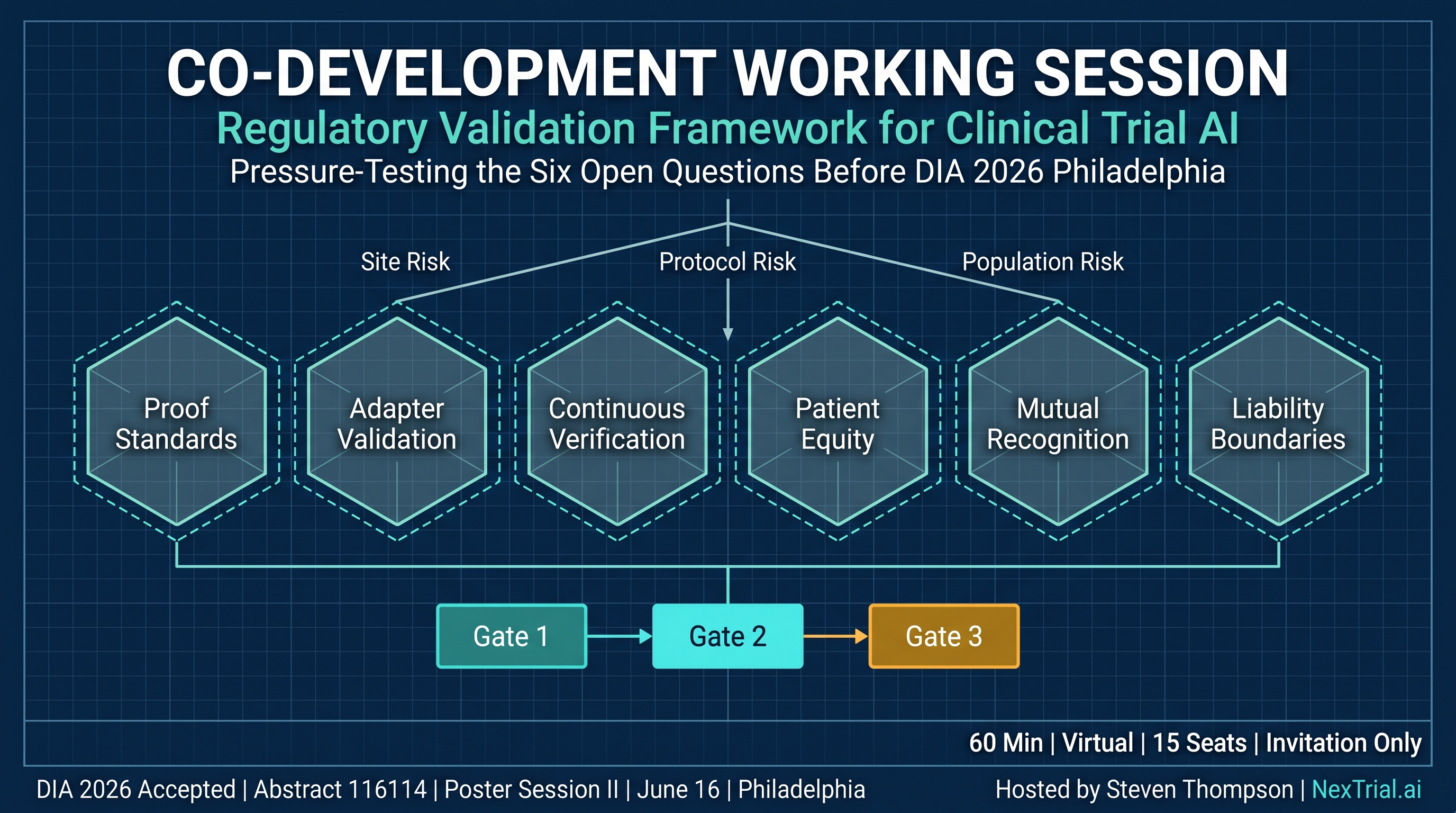

Pressure-Testing the Six Open Questions Before DIA 2026 Philadelphia


Why This Session Exists

A regulatory validation framework for AI systems across the clinical trial lifecycle was published in March 2026. It is the first framework of its kind mapped simultaneously to FDA, ANVISA, CDSCO, and EU AI Act requirements.

Since publication:

- The framework was accepted for poster presentation at the DIA 2026 Global Annual Meeting (Abstract ID 116114, Poster Session II, June 16, Pennsylvania Convention Center, Philadelphia)

- Professionals at IQVIA, AstraZeneca, Bristol Myers Squibb, Pfizer, and Tempus AI engaged with the framework

- A senior GCP/GVP consultant publicly challenged eight specific points on EU AI Act and GxP grounds; three of them identified genuine gaps that are now being addressed in version 2

- 21 professionals saved the framework for reference, and a single publication post gained 13 new followers

This is not a presentation. This is a working session. The output directly informs framework version 2, the DIA 2026 poster, and a submission to the Conference for AI Scientists (CAISc 2026).

What We Will Work On

Three Acknowledged Gaps (v2 Priorities)

Risk Management Methodology (EU AI Act Article 9)

The published framework verifies outputs but does not govern the prior question: should this site-protocol-population combination be activated at all? Since publication, a pre-verification risk management layer aligned with ICH E6(R3) has been designed around three dimensions: site risk, protocol risk, and population risk.

Data Governance (EU AI Act Article 10)

Data provenance, preprocessing documentation, and representativeness justification need to be addressed in v2.

Human Oversight Effectiveness

Can the framework's three PI attestation levels (Review, Independent Verification, Clinical Judgment) prevent rubber-stamping in practice? Version 2 will add mandatory escalation triggers and override rate monitoring.

Six Open Questions

Proof Certificate Standards

What should a regulatory-grade proof certificate contain?

Adapter Validation

How should jurisdiction adapter updates be validated without introducing regressions?

Continuous Verification

What re-verification frequency is appropriate for continuously operating AI systems?

Patient Equity

How should bias be measured and mitigated in physics-informed predictive models?

Mutual Recognition

How can proof certificates achieve cross-jurisdictional acceptance?

Liability Boundaries

How should liability be allocated when an AI system passes all gates and the PI attests?

Session Structure

Context setting: where the framework stands, what has been challenged, and what was accepted at DIA 2026

Rapid-fire Mentimeter polls: all six questions, establishing baseline positions

Deep dives on the three questions with the highest divergence (10 minutes each)

Version 2 priority ranking: what goes in, ranked by impact

Next steps: who contributes to v2, acknowledgments, and a DIA Philadelphia preview

Ground Rules

Critique is the point. Disagreement is productive. Every perspective is documented. The output of this session becomes part of the framework.

Who Should Be in the Room

The Framework

The full published framework (11 sections, 4 regulatory mapping tables, 20 references, and 6 open questions) is the required pre-read for all participants.

Read the Published Framework

Output and Next Steps

The working session output feeds three deliverables:

Framework v2

To be published on Substack, integrating working session insights, closing the acknowledged gaps, and acknowledging contributors. Target: late May 2026.

DIA 2026 Poster

Poster Session II, Tuesday June 16, 11:30 AM–1:30 PM, Pennsylvania Convention Center, Philadelphia. The poster will reference the co-development process and v2 refinements.

CAISc 2026 Submission

Conference for AI Scientists (July 24–25, 2026). The framework was co-developed with AI as a primary research participant, and the working session findings will serve as real-world validation data.

About the Host

Steven Thompson

Founder and CEO of NexTrial.ai, building AI orchestration infrastructure for clinical trials across the US, Brazil, and India. More than 20 years in regulated environments, including Biogen, Takeda, NuBank, and ADM. Author of the first regulatory validation framework for AI systems across the clinical trial lifecycle.

DIA 2026 Poster Presenter · Abstract ID 116114

This session is invitation-only. If you work in regulatory affairs, clinical operations, bioethics, or formal methods and want to contribute to the framework, request your invitation via the LinkedIn event or reach out directly.